Lojban Wave Lessons/Single page

Made by la klaku with help from various Lojbanists. Based on the work of la .kribacr. Spring 2013.

Foreword

These lessons are an attempt to expand on the Lessons originally started in Google Wave network, an excellent Lojban tutorial authored by la .kribacr., la xalbo, and transcribed by Marenz (which were parallel to lessons 1-4 of this tutorial). It explains newer rules of Lojban not covered by older materials such as "What is Lojban?" and "Lojban for Beginners".

If you are new to Lojban, I recommend listening to any recordings you can find of spoken Lojban (readings, music, conversation) both before and while you are taking this tutorial, in order to make yourself familiar with the sounds and words of the language. Furthermore, try to say the things you read with a Lojban accent if it's reasonably practical. This can help your pronunciation a lot.

When taking this tutorial, it's best to pause between lessons in order to internalize what you have learnt. I have attempted to build these lessons from the bottom up and exclude any words or concepts that have not been explained in previous lessons. Once explained, they are used freely throughout the remainder of the tutorial. I urge readers not to skip past anything they have not understood; if you have questions or are uncertain about something, feel free to ask the Lojban community, which can be found in Lojban Live Chat or on Google Groups. They will be happy to help.

In this tutorial, Lojban text is written in bold. Later, when Lojban loanwords are used in English sentences, they are not marked. Answers to exercises are displayed as a grey bar. Highlight the text in order to see it. English terms are written in italic.

Lastly, I have as far as possible attempted to use the Lojban words for grammatical constructs: sumka'i instead of pro-sumti, sumtcita instead of modal and jufra instead of utterance. This is because I feel the English words are often either arbitrary, in which case they are just more words to learn, or misleading, in which case they are worse than useless. In either case, as long as the words are specific to those who are learning Lojban anyway, there is no reason for them to exist as separate English words.

Lesson 0: Sounds

While you are (hopefully) eager to get started on the inner workings of Lojban grammar, a short lesson on the sounds and writing conventions of the language is beneficial. Learning a language only by reading is hard, and it's not easier if your internal voice is mispronouncing it.

For more details on the vowel and consonant sounds used in Lojban, click on the letters described. They point to Wikipedia articles which describe each sound and usually have an audio recording of it.

Vowels

There are five proper vowels in Lojban and one almost-vowel. First the proper ones:

| Letter | Pronunciation |
|---|---|
| a | as in "father" or "large" |
| e | as in "get" or "gem" |
| i | as in "machine" or "scream" (not as in "hit") |
| o | as in "bold" or "more", not as in "so" (this should be a 'pure' sound) |
| u | as in "rude" or "due" (not as in "but") |
| y | as "a" in "Tina" (not as in "but") |

These are pretty much the same as vowels in Italian or Spanish. The sixth (almost-)vowel, y, is called a "schwa" in the language trade, and is pronounced like the unstressed vowel in "comma", "taken" or "surprise". It's the sound that comes out when the mouth is completely relaxed.

Two vowels together are pronounced as one sound (and called a "diphthong"). Some examples are:

| Diphthong | Pronunciation |
|---|---|
| ai | as in "high" |
| au | as in "how" |
| ei | as in "hey" |
| oi | as in "boy" |
| ia | as in "yacht" |
| ie | as in "yes" |
| iu | as in "you" |
| ua | as in "wander" |
| ue | as in "wet" |
| uo | as in "woke" |
| ui | as in "we" |

i and u act as semivowels when they precede another vowel. Double vowels are rare. The only examples are ii, which is pronounced like English "ye" (as in Oh come all ye faithful) or Chinese "yi", and uu, pronounced like "woo".

Note that because of variation between English dialects, approximations like these will necessarily be wrong for some speakers. The surest way to learn the phonology of the language is to imitate those who already speak it!

Consonants

There are seventeen consonants in Lojban and one almost-consonant. The Lojban consonants are the same as the English, except that Lojban doesn't use the letters H, Q or W. Most of the consonants are pronounced like in English, but there are some exceptions:

| Letter | Pronunciation |
|---|---|
| g | always "g" as in "gum", never "g" as in "gem" |
| c | "sh", as in "ship" |
| j | as in "measure" or French "bonjour" |
| x | as in the exclamation "Ach!", or in German "Bach", Spanish "Jose" or Arabic "Khaled" |

The almost-consonant is the apostrophe ('). This letter is pronounced like the English letter H, but is only used between two vowels to prevent them from running into each other. Thus ui is normally pronounced "we", but u'i is "oohee". Groups of cmavo are called selma'o and are written using capital letters; unexpectedly, though, the capital version of this letter is h, not H (ko'a is KOhA).

The combination dj is like the j in "joke". The combination tc is like the ch in "chat".

The following sound as they do in English, with the exception that r is often rolled (but does not have to be):

b, d, f, k, l, m, n, p, r, s, t, v, z.

Letter names

Speaking of the letters, what are the names for them? For example, when reciting the alphabet in English the letter C is pronounced "see". This is rather different from the sound the letter makes when used in a word. How about Lojban? Well, consonants are straightforward: the name of a consonant letter is the sound of that letter, plus y. So the consonant letters of Lojban, "b, c, d, f, g ...", are named by, cy, dy, fy, gy ... in Lojban. The almost-consonant ' is called .y'y (pronounced like an agreeing uh-huh but without the stress).

Vowels are handled by following the vowel sound with the word bu, which signifies we're speaking about a symbol. So the vowels of Lojban are: .abu .ebu .ibu .obu .ubu and .ybu.
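The two naming rules above are mechanical enough to sketch in a few lines of Python. This is only an illustration: the function names `letter_name` and `spell` are made up for this example and are not part of any Lojban tool.

```python
VOWELS = "aeiouy"
CONSONANTS = "bcdfgjklmnprstvxz"  # the seventeen Lojban consonants


def letter_name(letter):
    """Return the Lojban name of a single Lojban letter."""
    if len(letter) != 1:
        raise ValueError(f"expected one letter, got {letter!r}")
    if letter == "'":
        return ".y'y"
    if letter in VOWELS:
        # Vowel + "bu"; the full stop marks the word-initial vowel.
        return "." + letter + "bu"
    if letter in CONSONANTS:
        # Consonant + "y".
        return letter + "y"
    raise ValueError(f"not a Lojban letter: {letter!r}")


def spell(word):
    """Spell a Lojban word letter by letter, using the letter names."""
    return " ".join(letter_name(ch) for ch in word)


print(spell("zdani"))  # zy dy .abu ny .ibu
```

Letters that Lojban does not use, such as h, q and w, are rejected with an error rather than guessed at.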

Lastly you should know that stress is placed on the second-to-last syllable in words with more than one syllable, ignoring syllables containing y, and that one-syllable words are not stressed.

Correct pronunciation

You don't have to be very precise about Lojban pronunciation, because the phonemes are distributed so that it is hard to mistake one sound for another. This means that rather than one 'correct' pronunciation, there is a range of acceptable pronunciation—the general principle is that anything is OK so long as it doesn't sound too much like something else. For example, Lojban r can be pronounced like the "R" in English, Scottish or French.

Two things to be careful of, though, are pronouncing Lojban i and u like Standard British English "hit" and "but" (Northern English "but" is fine!). This is because non-Lojban vowels, particularly these two, are used to separate consonants by people who find them hard to say. For example, if you have problems spitting out the zd in zdani (house), you can say zɪdani — where the ɪ is very short, but the final i has to be long.

Writing Lojban

As you have already seen, Lojban uses the Latin alphabet, though various Lojbanists have suggested different, usually self-designed ones. Furthermore, Lojban almost always uses lower-case letters. Capital letters are only used to mark stress in proper names, but people tend to avoid them even in names.

Apart from the letters, some punctuation is used:

A full stop (period) is a glottal stop (the consonant in the middle of "uh-oh") or a short pause. The rules of Lojban make it easier for one word to run into another when the second word begins with a vowel; so any word starting with a vowel conventionally has a full stop placed in front of it. Full stops are not usually used to end sentences.

Commas are rare in Lojban. They are only used to emphasize boundaries between syllables, and adding or removing commas never changes a word's pronunciation or meaning.

The following are found in the writing styles of different Lojbanists, but they are not conventional:

Spaces are usually used between words. They are mandatory between some words (more on that in lesson thirteen). Double or triple space is sometimes used before the beginning of new sentences. This is to clearly mark sentence shift visually. This might compensate for lack of capital letters which are used for the same purpose in English.

In òrder to visuàlly reprèsent the stress on the penultìmate syllàble, and bècause they find it visuàlly plèasing, some pèople use grave or acute àccents òver the vòwel of those syllàbles.

Some people borrow other punctuation marks from English, even though they are not canon, and Lojban is equipped with actual words which should compensate for any punctuation one might want to use. Nonetheless, a question mark, for example, clearly marks a sentence as a question and is much easier to catch with the eye than any word is, and so some Lojbanists use them. Quotation marks, parentheses and exclamation marks can be used similarly. While this is not ungrammatical, since it doesn't interfere with the sentences, some people think exotic punctuation creates an unwanted difference between written and spoken Lojban, generally a big no-no.

Lojbanized foreign proper names (cmevla)

The following names are Lojbanized - their sounds are transcribed into Lojban and their final sounds have been changed to a consonant. The final consonant is necessary, because that's how foreign names are differentiated from Lojban words. Again, more on that in lesson thirteen.

Exercise 1

Where are these places?

- .nuIORK.

- .romas.

- .xavanas.

- .kardif.

- .beidjin.

- .ANkaras.

- .ALbekerkis.

- .vankuver.

- .keiptaun.

- .taibeis.

- .bon.

- .delis.

- .nis.

- .atinas.

- .lidz.

- .xelsinkis.

Answer:

- New York: USA

- Rome: Italy

- Havana: Cuba

- Cardiff: Wales (The Welsh for "Cardiff" is "Caerdydd", which would Lojbanise to something like kairdyd..)

- Beijing: China

- Ankara: Turkey

- Albuquerque: New Mexico, USA

- Vancouver: Canada

- Cape Town: South Africa

- Taipei: Taiwan (note b, not p. Although actually, the b in Pinyin is pronounced as a p... But this isn't meant to be a course on Mandarin!)

- Bonn: Germany

- Delhi: India (The Hindi for "Delhi" is "Dillî", which would give dilis. or dili'is..)

- Nice: France

- Athens: Greece ("Athina" in Greek)

- Leeds: England

- Helsinki: Finland

Exercise 2

Lojbanise the following names.

There are usually alternative spellings for names, either because people pronounce the originals differently, or because the exact sound doesn't exist in Lojban, so you need to choose between two Lojban letters. This doesn't matter, so long as everyone knows who or where you're talking about.

- John

- Melissa

- Amanda

- Matthew

- Michael

- David Bowie

- Jane Austen

- William Shakespeare

- Sigourney Weaver

- Richard Nixon

- Istanbul

- Madrid

- Tokyo

- San Salvador

Answer:

- .djon. (or .djan. with some accents)

- .melisys.

- .amandys. (again, depending on your accent, the final y may be a, the initial a may be y, and the middle a may be e.)

- .matiius.

- .maikyl. or .maikl. , depending on how you say it.

- .deivyd.bauis. or .bouis.

- .djein.ostin.

- .uiliiam.cekspir.

- .sigornis.uivyr. or .sygornis.uivyr.

- .ritcyrd.niksyn.

- .istanBUL. with English stress, .IStanbul. with American, .istanbul. with Turkish. Lojbanists generally prefer to base cmevla on local pronunciation, but this is not an absolute rule.

- .maDRID.

- .tokiios.

- .san.salvaDOR. (with Spanish stress)

Lessons

Lesson 1: Bridi, jufra, sumti and selbri

Bridi is the most central unit of Lojban utterances. The concept is very close to what we call a proposition in English. A bridi is a claim that some objects stand in a relation to each other, or that an object has some property. This stands in contrast to jufra, which are merely Lojban utterances, which can be bridi or anything else being said. The difference between a bridi and a jufra is that a jufra does not necessarily state anything, while a bridi does. Thus, a bridi might be true or false, while not all jufra can be said to be such.

To have some examples (in English, to begin with), Mozart was the greatest musician of all time is a bridi, because it makes a claim with a truth value, and it involves an object, Mozart, and a property, being the greatest musician of all time. On the contrary, Ow! My toe! is not a bridi, since it does not involve a relation, and thus does not state anything. Both, though, are jufra.

Try to identify the bridi among these English jufra:

- I hate it when you do that.

- Woah, that looks delicious!

- Geez, not again.

- No, I own three cars.

- Nineteen minutes past eight.

- This Saturday, yes.

Answer: The first, second and fourth are bridi. The rest contain no relation or claim of a property.

Put in Lojban terms, a bridi consists of one selbri, and one or more sumti. The selbri is the relation or claim about the object, and the sumti are the objects which are in a relation. Note that object is not a perfect translation of sumti, since sumti can refer not just to physical objects, but also to purely abstract things like "The idea of warfare". A better translation would be something like "subject, direct or indirect object" for sumti, and main verb for selbri, though, as we will see, this is not optimal either.

We can now write the first important lesson down: a bridi = one selbri + one or more sumti.

Put another way, a bridi states that some sumti do/are something explained by a selbri.

Identify the sumti and selbri equivalents in these English jufra:

I will pick up my daughters with my car.

Answer: selbri: pick up (with). sumti: I, my daughters, my car

He bought 5 new shirts from Mark for just two hundred euro!

Answer: selbri: bought (from) (for). sumti: He, 5 new shirts, Mark and two hundred euro

Since these concepts are so fundamental to Lojban, let's have a third example:

So far, the EPA has done nothing about the amount of sulphur dioxide.

Answer: selbri: has done (about) sumti: The EPA, nothing and the amount of sulphur dioxide

Now try to begin making Lojban bridi. For this you will need to use some words, which can act as selbri:

- dunda = x1 gives x2 to x3 (without payment)

- pelxu = x1 is yellow

- zdani = x1 is a home of x2

Notice that these words meaning give, yellow and home would be considered a verb, an adjective and a noun in English. In Lojban, there are no such categories and no such distinction. dunda can be translated as gives (verb), is a giver (noun), is giving (adjective), or as an adverbial form. They all act as selbri, and are used in the same way.

As well as a few words, which can act as sumti:

- mi = "I" or "we" – the one or those who are speaking

- ti = "this" – a nearby thing or event which can be pointed to by the speaker

- do = "you" – the one or those who are being spoken to

See the strange translations of the selbri above - especially the x1, x2 and x3? Those are called sumti places. They are places where sumti can go to fill a bridi. Filling a sumti in a place states that the sumti fits in that place. The second place of dunda, for example, x2, is the thing being given. The third is the object which receives the thing. Notice also that the translation of dunda has the word to in it. This is because, while this word is needed in English to signify the receiver, the receiver is in the third sumti place of dunda. So when you fill the third sumti place of dunda, the sumti you fill in is always the receiver, and you don't need an equivalent to the word to!

To say a bridi, you simply say the x1 sumti first, then the selbri, then any other sumti.

Usual bridi: (x1 sumti) (selbri) (x2 sumti) (x3 sumti) (x4 sumti) (x5 sumti) (and so on)

The order can be played around with, but for now, we stick with the usual form. To say I give this to you you just say mi dunda ti do, with the three sumti at the right places.
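The usual word order can be sketched as a small Python function. This is a toy model for illustration only: `make_bridi` and `explain` are hypothetical helper names, and the dictionary holds just the three selbri introduced above.

```python
# Place structures of the selbri introduced so far.
PLACE_STRUCTURES = {
    "dunda": "x1 gives x2 to x3 (without payment)",
    "pelxu": "x1 is yellow",
    "zdani": "x1 is a home of x2",
}


def make_bridi(selbri, *sumti):
    """Arrange words in the usual order: x1 sumti, selbri, then x2, x3, ..."""
    if selbri not in PLACE_STRUCTURES:
        raise ValueError(f"unknown selbri: {selbri!r}")
    if not sumti:
        raise ValueError("this sketch needs at least the x1 sumti")
    return " ".join([sumti[0], selbri, *sumti[1:]])


def explain(selbri, *sumti):
    """Show which sumti lands in which place of the selbri's definition."""
    text = PLACE_STRUCTURES[selbri]
    for number, word in enumerate(sumti, start=1):
        text = text.replace(f"x{number}", f"[{word}]")
    return text


print(make_bridi("dunda", "mi", "ti", "do"))  # mi dunda ti do
print(explain("dunda", "mi", "ti", "do"))     # [mi] gives [ti] to [do] (without payment)
```

Note that no word for "to" appears in the Lojban output: the third position alone marks do as the receiver.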

So, how would you say This is a home of me?

Answer: ti zdani mi

Try a few more in order to let the idea of a place structure sink in.

You give this to me?

Answer: do dunda ti mi

And translate ti pelxu

Answer: This is yellow.

Quite easy once you get the hang of it, right?

Multiple bridi after each other are separated by .i. This is the Lojban equivalent of a full stop, but it usually goes before bridi instead of after them. It's often left out before the first bridi, though, as in all these examples:

- .i = Sentence separator. Separates any two jufra (and therefore also bridi).

ti zdani mi .i ti pelxu = This is a home to me. This is yellow.

Before you move on to the next lesson, I recommend that you take a break for at least seven minutes to let the information sink in.

Lesson 2: Skipping around with FA and zo'e

Most selbri have from one to five sumti places, but some have more. Here is a selbri with four sumti places:

- vecnu = x1 sells x2 to x3 for price x4

If I want to say I sell this, it would be too much to have to fill the sumti places x3 and x4, which specify who I sell the thing to, and for what price. Luckily, I don't need to. sumti places can be filled with zo'e. zo'e indicates to us that the value of the sumti place is unspecified because it's unimportant or can be determined from context.

- zo'e = something; fills a sumti place with something, but does not state what.

So to say I sell to you, I could say

| mi vecnu zo'e do zo'e | I sell something to you for some price. |

How would you say: This is a home (for somebody)?

Answer: ti zdani zo'e

How about (someone) gives this to (someone)?

Answer: zo'e dunda ti zo'e

Still, filling out three zo'e just to say that a thing is being sold takes time. Therefore you don't need to write all the zo'e in a bridi. The rule simply is that if you leave out any sumti, they will be considered as if they contained zo'e. If the bridi begins with a selbri, the x1 is presumed to be omitted and it becomes zo'e.
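The omission rule can be sketched as follows. This is a rough model under stated assumptions: every sumti is a single word, the number of places (`arity`) is passed in by hand, and `fill_places` is a name invented for this example.

```python
def fill_places(text, selbri, arity):
    """Return the sumti for x1..xN, writing zo'e for every omitted place."""
    words = text.split()
    if selbri not in words:
        raise ValueError(f"{selbri!r} does not occur in {text!r}")
    position = words.index(selbri)
    sumti = words[:position] + words[position + 1:]
    if position == 0:
        # The bridi begins with the selbri, so x1 is presumed omitted.
        sumti = ["zo'e"] + sumti
    if len(sumti) > arity:
        raise ValueError(f"{selbri} only has {arity} sumti places")
    # Any places left over at the end are filled with zo'e as well.
    return sumti + ["zo'e"] * (arity - len(sumti))


print(fill_places("do dunda", "dunda", 3))  # ['do', "zo'e", "zo'e"]
print(fill_places("vecnu ti", "vecnu", 4))  # ["zo'e", 'ti', "zo'e", "zo'e"]
```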

Try it out. What's Lojban for I sell?

Answer: mi vecnu

And what does zdani mi mean?

Answer: Something is a home of me or just I have a home.

As mentioned earlier, the form doesn't have to be {x1 sumti} {selbri} {x2 sumti} {x3 sumti} (etc.) In fact, you can place the selbri anywhere you want, just not at the beginning of the bridi. If you do that, the x1 is considered left out and filled with zo'e instead. So the following three jufra are all exactly the same bridi:

- mi dunda ti do

- mi ti dunda do

- mi ti do dunda

Sometimes this is used for poetic effect. You sell yourself could be do do vecnu, which sounds better than do vecnu do. Or it can be used for clarity if the selbri is very long and is therefore better left at the end of the bridi.

There are also several ways to play around with the order of the sumti inside the bridi. The easiest one is by using the words fa, fe, fi, fo and fu.

- fa = Tags the following sumti as filling x1

- fe = Tags the following sumti as filling x2

- fi = Tags the following sumti as filling x3

- fo = Tags the following sumti as filling x4

- fu = Tags the following sumti as filling x5

Notice that the vowels are the five vowels in the Lojban alphabet in order. Using one of these words marks that the next sumti will fill the x1, x2, x3, x4 and x5 respectively. The next sumti after that will be presumed to fill a slot one greater than the previous. To use an example:

dunda fa do fe ti do – Giving by you of this thing to you. fa marks the x1, the giver, which is you. fe marks the thing being given, the x2. Sumti counting then continues from fe, meaning that the last sumti fills x3, the object receiving.
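The counting rule for fa, fe, fi, fo and fu can be sketched in Python. This is a simplified model, assuming single-word sumti and treating a place that is filled twice as an error; `assign_places` is a hypothetical helper name.

```python
FA = {"fa": 1, "fe": 2, "fi": 3, "fo": 4, "fu": 5}


def assign_places(text, selbri):
    """Map place numbers to sumti, following FA tags and the usual counting."""
    words = text.split()
    if selbri not in words:
        raise ValueError(f"{selbri!r} does not occur in {text!r}")
    places = {}
    next_place = 1
    for word in words:
        if word == selbri:
            # Untagged sumti after the selbri start at x2 at the earliest.
            next_place = max(next_place, 2)
        elif word in FA:
            # A tag decides which place the following sumti fills.
            next_place = FA[word]
        else:
            if next_place in places:
                raise ValueError(f"x{next_place} is filled twice")
            places[next_place] = word
            # Counting continues from the place just filled.
            next_place += 1
    return places


print(assign_places("dunda fa do fe ti do", "dunda"))
# {1: 'do', 2: 'ti', 3: 'do'}
```

The printed result matches the walk-through above: fa puts do in x1, fe puts ti in x2, and the untagged do continues on to x3.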

Attempt to translate the following three sentences:

mi vecnu fo ti fe do

Answer: I sell, for the price of this, you. or I sell you for the price of this (probably pointing to a bunch of money)

zdani fe ti

Answer: This has a home. Here, the fe is redundant.

vecnu zo'e mi ti fa do

Answer: You sell something to me for this price

Lesson 3: tanru and lo

In this lesson, you will become familiar with the concept of a tanru. A tanru is formed when a selbri is put in front of another selbri, modifying its meaning. A tanru is itself a selbri, and can combine with other selbri or tanru to form more complex tanru. Thus zdani vecnu is a tanru, as well as pelxu zdani vecnu, which is made from the tanru pelxu zdani and the single brivla word vecnu. To understand the concept of tanru, consider the English noun combination lemon tree. If you didn't know what a lemon tree was, but had heard about both lemons and trees, you would not be able to deduce what a lemon tree was. Perhaps a lemon-colored tree, or a tree shaped like a lemon, or a tree whose bark tastes like lemon. The only things you could know for sure would be that it would be a tree, and it would be lemon-like in some way.

A tanru is closely analogous to this. It cannot be said exactly what a zdani vecnu is, but it can be said that it is definitely a vecnu, and that it's zdani-like in some way. And it could be zdani-like in any way. In theory, no matter how silly or absurd the connection to zdani was, it could still truly be a zdani vecnu. However, it must actually be a vecnu in the ordinary sense in order for the tanru to apply. You could gloss zdani vecnu as home seller, or even better but worse sounding a home-type-of seller. The place structure of a tanru is always that of the rightmost selbri. It's also said that the left selbri modifies the right selbri.

"Really?", you'd ask, skeptically, "It doesn't matter how silly the connection to the left word in a tanru is, it's still true? So I could call all sellers for zdani vecnu and then make up some silly excuse why I think it's zdani-like?"

Well yes, but then you'd be a dick. Or at least you'd be intentionally misleading. In general, you should use a tanru when it's obvious how the left word relates to the right.

Attempt to translate the following: ti pelxu zdani do

Answer: This is a yellow home for you. Again, we don't know in which way it's yellow. Probably it's painted yellow, but we don't know for sure.

mi vecnu dunda

Answer: I sell-like give. What can that mean? No idea. It certainly doesn't mean that you sold something, since, by definition of dunda, there can be no payment involved. It has to be a giveaway, but be sell-like in some aspect.

And now for something completely different. What if I wanted to say I sold to a German?

- dotco = x1 is German/reflects German culture in aspect x2

I can't say mi vecnu zo'e dotco because that would leave two selbri in a bridi, which is not permitted. I could say mi dotco vecnu but that would be unnecessarily vague - I could sell in a German way. Likewise, if I want to say I am friends with an American, what should I say?

- pendo = x1 is a friend of x2

- merko = x1 is American/reflects US culture in aspect x2

Again, the obvious would be to say mi pendo merko, but that would form a tanru, meaning I am friend-like American, which is wrong. What we really want is to take the selbri merko and transform it into a sumti so it can be used in the selbri pendo. This is done by the two words lo and ku.

- lo = generic begin convert selbri to sumti word. Extracts x1 of selbri to use as sumti.

- ku = end convert selbri to sumti process.

You simply place a selbri between these two words, and it takes anything that can fill the x1 of that selbri and turns it into a sumti.

So for instance, the things that can fill zdani's x1 are only things which are homes of somebody. So lo zdani ku means a home or some homes for somebody. Similarly, if I say that something is pelxu, it means it's yellow. So lo pelxu ku refers to something yellow.

Now you have the necessary grammar to be able to say I am friends with an American. How?

Answer: mi pendo lo merko ku

There is a good reason why the ku is necessary. Try to translate A German sells this to me

Answer: lo dotco ku vecnu ti mi If you leave out the ku, you do not get a bridi, but simply three sumti. Since lo…ku cannot convert bridi, the ti is forced outside the sumti, the lo-construct is forced to close and it simply becomes the three sumti of lo dotco vecnu {ku}, ti and mi.

You always have to be careful with jufra like lo zdani ku pelxu. If the ku is left out the conversion process does not end, and it simply becomes one sumti, made from the tanru zdani pelxu and then converted with lo.
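The effect of a missing ku can be shown with a toy word-grouper. This is a deliberately naive sketch, not a real Lojban parser: it only knows the words introduced so far, and `read_tanru` and `group_words` are names invented for this example.

```python
SELBRI_WORDS = {"dunda", "pelxu", "zdani", "vecnu", "dotco", "pendo", "merko"}
SIMPLE_SUMTI = {"mi", "ti", "do", "zo'e"}


def read_tanru(words, start):
    """Collect consecutive selbri words beginning at index `start`."""
    end = start
    while end < len(words) and words[end] in SELBRI_WORDS:
        end += 1
    return words[start:end], end


def group_words(text):
    """Split a jufra into (kind, text) chunks, kind being 'sumti' or 'selbri'."""
    words = text.split()
    chunks = []
    i = 0
    while i < len(words):
        word = words[i]
        if word == "lo":
            # lo swallows every selbri word that follows, until ku or a non-selbri.
            tanru, i = read_tanru(words, i + 1)
            if not tanru:
                raise ValueError("lo must be followed by a selbri")
            if i < len(words) and words[i] == "ku":
                i += 1  # explicit terminator
            chunks.append(("sumti", "lo " + " ".join(tanru) + " ku"))
        elif word in SELBRI_WORDS:
            tanru, i = read_tanru(words, i)
            chunks.append(("selbri", " ".join(tanru)))
        elif word in SIMPLE_SUMTI:
            chunks.append(("sumti", word))
            i += 1
        else:
            raise ValueError(f"unexpected word: {word!r}")
    return chunks


print(group_words("lo dotco ku vecnu ti mi"))
print(group_words("lo dotco vecnu ti mi"))
```

With the ku in place, the first call yields a sumti, the selbri vecnu and two more sumti. Without it, the second call yields three sumti and no selbri at all, because vecnu has been absorbed into the description.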

Lesson 4: Attitudinals

Another concept which can be unfamiliar to English speakers is that of attitudinals. Attitudinals are words that express emotions directly. They turned out to be incredibly awesome and useful. They all have a so-called free grammar, which means that they can appear almost anywhere within bridi without disrupting the bridi's grammar or any grammatical constructs.

In Lojban grammar, an attitudinal applies to the previous word. If that previous word is a word which begins a construct (like .i or lo), it applies to the entire construct. Likewise, if the attitudinal follows a word which ends a construct like ku, it applies to the ended construct.

Let's have two attitudinals to make some examples:

- ui = attitudinal: simple pure emotion: happiness - unhappiness

- za'a = attitudinal: evidential: I directly observe

Note that in the definition of ui, there are listed two emotions, happiness and unhappiness. This means that ui is defined as happiness, while its negation means unhappiness. Negation might be the wrong word here. Technically, the other definition of ui belongs to another construct, ui nai. Most of the time, the second definition of attitudinals - the ones suffixed with nai - really is the negation of the bare attitudinal. Other times, not so much.

- nai = misc. negation - attached to attitudinals, it changes the meaning into the attitudinal's "negation"

And some more selbri, just for the heck of it:

- citka = x1 eats x2

- plise = x1 is an apple of strain/type x2

The sentence do citka lo plise ku ui means You eat an apple, yay! (especially expressing that it is the apple that the speaker is happy about, not the eating, or the fact that it was you.) In the sentence do za'a citka lo plise ku, the speaker directly observes that it is indeed you who eats an apple, as opposed to someone else.

If an attitudinal is placed at the beginning of the bridi, it is understood to apply to an explicit or implicit .i, thus applying to the entire bridi:

ui za'a do dunda lo plise ku mi – Yay, I observe that you give an/some apple to me!

mi vecnu ui nai lo zdani ku – I sell (which sucks!) a home.

Try it out with a few examples. First, though, here are some more attitudinals:

- .u'u = attitudinal: simple pure emotion: guilt - remorselessness - innocence.

- .oi = attitudinal: complex pure emotion: complaint - pleasure.

- iu = attitudinal: miscellaneous pure emotion: love - hate.

Look at that, a word with three emotions in the definition! The middle one is accessed by suffixing with cu'i. It's considered the midpoint of the emotion.

- cu'i = attitudinal midpoint scalar: attach to attitudinal to change the meaning to the "midpoint" of the emotion.

Try saying I give something to a German, who I love

Answer: mi dunda fi lo dotco ku iu (or with zo'e instead of fi)

Now Aah, I eat a yellow apple

Answer: .oi nai mi citka lo pelxu plise ku

Let's have another attitudinal of a different kind to illustrate something peculiar:

- .ei = attitudinal: complex propositional emotion: obligation - freedom.

So, quite easy: I have to give the apple away is mi dunda .ei lo plise ku, right? It is, actually! When you think about it, that's weird. Why is it that all the other attitudinals we have seen so far express the speaker's feeling about the bridi, but this one actually changes what the bridi means? Surely, by saying I have to give the apple away, we say nothing about whether the apple actually is being given away. If I had used ui, however, I would actually have stated that I gave the apple away, and that I was happy about it. What's that all about?

This issue, exactly how attitudinals change the conditions on which a bridi is true, is a subject of a minor debate. The official, textbook rule, which probably won't be changed, is that there is a distinction between pure emotions and propositional emotions. Only propositional emotions can change the truth conditions, while pure emotions cannot. In order to express a propositional emotional attitudinal without changing the truth value of the bridi, you can just separate it from the bridi with .i. There is also a word for explicitly conserving or changing the truth conditions of a bridi:

- da'i = attitudinal: discursive: supposing - in fact

Saying da'i in a bridi changes the truth conditions to hypothetical, which is the default using propositional attitudinals. Saying da'i nai changes the truth condition to the normal, which is default using pure attitudinals.

So, what are two ways of saying I give the apple away? (and feel obligation about it)

Answer: mi dunda lo plise ku .i .ei and mi dunda da'i nai .ei lo plise ku

The feeling of an attitudinal can be ascribed to someone else using dai. Usually in ordinary speech, the attitudinal is ascribed to the listener, but it doesn't have to be so. Also, because the word is glossed empathy (feeling others' emotions), some Lojbanists mistakenly think that the speaker must share the emotion being ascribed to others.

- dai = attitudinal modifier: empathy (ascribes attitudinal to someone else, unspecified)

Example: .u'i .oi dai citka ti - Ha ha, this was eaten! That must have hurt!

- .u'i = attitudinal: simple pure emotion: amusement - weariness

What often used phrase could .oi .u'i dai mean?

Answer: Ouch, very funny.

And another one to test your knowledge: Try to translate He was sorry he sold a home (remembering that tense is implied and need not be specified. Also, he could be obvious from context)

Answer: u'u dai vecnu lo zdani ku

Lastly, the intensity of an attitudinal can be specified using certain words. These can be used after an attitudinal, or an attitudinal with nai or cu'i suffixed. It's less clear what happens when you attach them to other words, like a selbri, but it's mostly understood as intensifying or weakening the selbri in some unspecified way:

| Modifying word | Intensity |
|---|---|
| cai | Extreme |
| sai | Strong |
| (none) | Unspecified (medium) |
| ru'e | Weak |

What emotion is expressed using .u'i nai sai ?

Answer: Strong weariness

And how would you express that you are mildly remorseless?

Answer: .u'u cu'i ru'e
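The way nai, cu'i and the intensity words stack onto an attitudinal can be sketched as a lookup. This is only an illustration: the table covers just the pure-emotion attitudinals defined in this lesson, a midpoint is listed only where the definition gives one, and `describe` is a made-up function name.

```python
# Scales as given in this lesson: "" is the bare word, "nai" the opposite end.
SCALES = {
    "ui": {"": "happiness", "nai": "unhappiness"},
    ".u'u": {"": "guilt", "cu'i": "remorselessness", "nai": "innocence"},
    ".oi": {"": "complaint", "nai": "pleasure"},
    "iu": {"": "love", "nai": "hate"},
    ".u'i": {"": "amusement", "nai": "weariness"},
}
INTENSITY = {"cai": "extreme", "sai": "strong", "ru'e": "weak"}


def describe(phrase):
    """Gloss an attitudinal with an optional nai/cu'i and intensity word."""
    words = phrase.split()
    if not words or words[0] not in SCALES:
        raise ValueError(f"unknown attitudinal in {phrase!r}")
    scale, rest = SCALES[words[0]], words[1:]
    point = ""
    if rest and rest[0] in ("nai", "cu'i"):
        point = rest.pop(0)
    if point not in scale:
        raise ValueError(f"no gloss listed for {words[0]} {point}")
    if not rest:
        return scale[point]  # intensity left unspecified
    if len(rest) != 1 or rest[0] not in INTENSITY:
        raise ValueError(f"unexpected words: {rest}")
    return INTENSITY[rest[0]] + " " + scale[point]


print(describe("ui nai"))       # unhappiness
print(describe(".oi nai sai"))  # strong pleasure
print(describe("iu cai"))       # extreme love
```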

Lesson 5: Reordering places with SE

Before we venture into the territory of more complex constructs, you should learn another mechanism for reordering the sumti of a selbri. This, as we will show, is very useful for making description-like sumti (the kind of sumti with lo).

Consider the sentence I eat a gift, which might be appropriate if that gift is an apple. To translate this, it would seem natural to look up a selbri meaning gift before continuing. However, if one looks carefully at the definition of dunda, x1 gives x2 to x3, one realizes that the x2 of dunda is something given – a gift.

So, to express that sentence, we can't say mi citka lo dunda ku, because lo dunda ku would be the x1 of dunda, which is a donor of a gift. Cannibalism aside, we don't want to say that. What we want is a way to extract the x2 of a selbri.

This is one example where it is useful to use the word se. What se does is to modify a selbri such that the x1 and x2 of that selbri trade places. The construct of se + selbri is on its own considered one selbri. Let's try with an ordinary sentence:

| ti se fanva mi = mi fanva ti | This is translated by me [literally] (= I translate this). |

- fanva = x1 translates x2 to language x3 from language x4 with result of translation x5

Often, but not always, bridi with se-constructs are translated to sentences with the passive voice, since the x1 is often the object taking action.

se has its own family of words. All of them swap a different place with the x1.

| Word | Effect |
|---|---|
| se | swap x1 and x2 |
| te | swap x1 and x3 |
| ve | swap x1 and x4 |
| xe | swap x1 and x5 |

Note that s, t, v, and x are consecutive consonants in the Lojban alphabet.

So: Using this knowledge, what would ti xe fanva ti mean?

Answer: This is a translation of this (or fanva ti fu ti)

se and its family can of course be combined with fa and its family. The result can be very confusing indeed, if you wish to make it so:

- klama = x1 travels/goes to x2 from x3 via x4 using x5 as transportation device

fo lo zdani ku te klama fe do ti fa mi

= mi te klama do ti lo zdani ku

and since te exchanges x1 and x3:

= ti klama do mi lo zdani ku

= This travels to you from me via a home.

Of course, no one would make such a sentence except to confuse people, or cruelly to test their understanding of Lojban grammar.
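For readers who like to see the bookkeeping spelled out, the place shuffling in the klama example can be traced with a short Python sketch. It is only an illustration, not part of Lojban itself: the names assign_places and undo_conversion are made up for this purpose, and the sketch assumes the simplified rule that an untagged sumti takes the place right after the previous one (it ignores the rule that x1 is skipped when no sumti precedes the selbri).

```python
# Place numbers selected by the fa family, and the place swapped with x1 by the se family.
FA = {"fa": 1, "fe": 2, "fi": 3, "fo": 4, "fu": 5}
SE = {"se": 2, "te": 3, "ve": 4, "xe": 5}


def assign_places(sumti):
    """Map place numbers to sumti, reading (tag, sumti) pairs from left to right.

    A tag from the fa family jumps to that place; an untagged sumti (tag None)
    takes the place following the previous one. This is a simplified counting rule.
    """
    places = {}
    next_place = 1
    for tag, word in sumti:
        if tag is not None:
            next_place = FA[tag]
        places[next_place] = word
        next_place += 1
    return places


def undo_conversion(se_word, places):
    """Rewrite the places of 'se_word + selbri' as places of the bare selbri."""
    n = SE[se_word]
    swapped = dict(places)
    swapped[1], swapped[n] = places.get(n), places.get(1)
    # Drop places that ended up empty after the swap.
    return {place: word for place, word in swapped.items() if word is not None}


# fo lo zdani ku te klama fe do ti fa mi
converted = assign_places([("fo", "lo zdani"), ("fe", "do"), (None, "ti"), ("fa", "mi")])
plain = undo_conversion("te", converted)
```

Here converted holds the places of te klama (x1 mi, x2 do, x3 ti, x4 lo zdani), and plain holds the places of klama after x1 and x3 have traded back (x1 ti, x2 do, x3 mi, x4 lo zdani), which matches the step-by-step rewriting above.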

And thus, we have come to the point where we can say I eat a gift. Simply exchange the sumti places of dunda to get the gift to be x1, then extract this new x1 with lo...ku. So, how would you say it?

One (possible) answer: mi citka lo se dunda ku

This shows one of the many uses for se and its family.

Lesson 6: Abstractions

So far we have only expressed single sentences one at a time. To express more complex things, however, you often need subordinate sentences. Luckily, these are much easier in Lojban than one would expect.

We can begin with an example to demonstrate this:

I am happy that you are my friend.

Here, the main bridi is I am happy that X., and the sub-bridi is You are my friend. Looking at the definition for happy, which is gleki

- gleki = x1 is happy about x2 (event/state)

we can see that the x2 needs to be an event or a state. This is natural, because one cannot be happy about an object in itself, only about some state the object is in. But alas! Only bridi can express a state or an event, and only sumti can fill the x2 of gleki!

As you might have guessed, there is a solution. The words su'u...kei are a generic convert bridi to selbri function, and work just like lo…ku.

- su'u = x1 is an abstraction of {bridi} of type x2

- kei = end abstraction

Example:

| melbi su'u dansu kei Beautiful dancing/Beautiful dance |

- melbi = x1 is beautiful to x2.

- dansu = x1 dances to accompaniment/music/rhythm x2.

It's usually hard to find good uses of a bridi as a selbri. However, since su'u BRIDI kei is a selbri, one can convert it to a sumti using lo...ku.

Now we have the equipment to express I am happy that you are my friend. Try it out!

- pendo = x1 is a friend of x2

Answer: mi gleki lo su'u do pendo mi kei ku

However, su'u…kei does not see much use. People prefer to use the more specific words nu…kei and du'u…kei. They work the same way, but mean something different. nu…kei treats the bridi in between as an event or state, and du'u…kei treats it as an abstract bridi, for expressing things like ideas, thoughts or truths. All these words (except kei) are called abstractors. There are many of them, but only a few are used much. su'u…kei is a general abstractor, and will work in all cases.

- nu = x1 is an event of (bridi)

- du'u = x1 is the predication of (bridi), as expressed in sentence x2

Use nu to say I'm happy about talking to you.

- tavla = x1 talks to x2 about subject x3 in language x4.

Answer: mi gleki lo nu tavla do kei ku (notice that both the English and the Lojban are vague as to who is doing the talking).

Other important abstractors include ka...kei (property/aspect abstraction), si'o...kei (concept/idea abstraction) and ni...kei (quantity abstraction), among others. The meanings of these are a complicated matter, and will be discussed much later, in lesson twenty-nine. For now, you'll have to do without them.

It is important to notice that some abstractors have several sumti places. As an example, du'u can be mentioned. du'u is defined:

- du'u = abstractor. x1 is the predicate/bridi of (bridi) expressed in sentence x2.

The other sumti places besides x1 are rarely used, but lo se du'u (bridi) kei ku is sometimes used as a sumti for indirect quotation: I said that I was given a dog can be written mi cusku lo se du'u mi te dunda lo gerku ku kei ku, if you will pardon the weird example.

- cusku = x1 expresses x2 to x3 through medium x4

- gerku = x1 is a dog of breed x2

Lesson 7: NOI

While we're at it, there's another type of subordinate bridi. These are called relative clauses. They are sentences which add some description to a sumti. Indeed, the "which" in the previous sentence marked the beginning of a relative clause in English describing relative clauses. In Lojban, they come in two flavors, and it might be worth distinguishing the two kinds before learning how to express them.

The two kinds are called restrictive and non-restrictive (or incidental) relative clauses. An example would be good here:

My brother, who is two meters tall, is a politician.

This can be understood in two ways. I could have several brothers, in which case saying he is two meters tall will let you know which brother I am talking about. Or I might have only one brother, in which case I am simply giving you additional information.

In English as well as Lojban we distinguish between these two kinds: the first interpretation is restrictive (since it helps restrict the possible brothers I might be talking about), the second non-restrictive. When speaking English, context and tone of voice (or in written English, punctuation) help us distinguish between these two, but not so in Lojban. Lojban uses the constructs poi…ku'o and noi…ku'o for restrictive and non-restrictive relative clauses, respectively.

Let's have a Lojbanic example, which can also explain our strange gift-eating behavior in the example in lesson five:

- noi = begin non-restrictive relative clause (can only attach to sumti)

- poi = begin restrictive relative clause (can only attach to sumti)

- ku'o = end relative clause

| mi citka lo se dunda ku poi plise ku'o I eat the gift that (something is) an apple. |

Here the poi…ku'o is placed just after lo se dunda ku, so it applies to the gift. To be strict, the relative clause does not specify what it is, which is an apple, but given the context we can safely assume that it means that the gift is an apple. If we want to be absolutely sure that it indeed was the gift that was an apple, we use the word ke'a, which is a sumka'i (a Lojban pronoun, more on them later) representing the sumti which the relative clause is attached to. ke'a is often omitted for brevity when it would be in the x1 place of the relative clause.

- ke'a = sumka'i; refers to the sumti to which the relative clause is attached.

| ui mi citka lo se dunda ku poi ke'a plise ku'o Yay, I eat the gift that is an apple. |

To underline the difference between restrictive and non-restrictive relative clauses, here's another example:

- lojbo = x1 reflects Lojbanic culture/community in aspect x2; x1 is Lojbanic.

| mi noi lojbo ku'o fanva fo lo lojbo ku I, who am a Lojbanic, translate from some Lojbanic language. |

Here, there are not multiple things which mi could refer to, and the fact that I am Lojbanic is merely additional information not needed to identify me. Therefore noi…ku'o is appropriate.

See if you can translate this:

I flirt with the man who is beautiful/handsome.

- nanmu = x1 is a man

- melbi = x1 is beautiful to x2 in aspect (ka) x3 by standard x4

- cinjikca = x1 flirts/courts x2, exhibiting sexuality x3 by standard x4

Answer: mi cinjikca lo nanmu ku poi (ke'a) melbi ku'o

On a more technical side note, it might be useful to know that lo (selbri) ku is often seen defined as zo'e noi ke'a (selbri) ku'o.

Besides, it is also possible to connect two or more relative clauses to the same sumti by using the relative clause joiner zi'e. Its syntax is "sumti + relative clause + zi'e + relative clause (+ zi'e + relative clause (...))". Here is an example:

- penmi = x1 meets/encounters x2 at/in location x3

- dasni = x1 wears/is robed/garbed in x2 as a garment of type x3

| mi tavla lo nanmu ku poi do penmi ke'a ku'o zi'e noi dasni lo xunre ku ku'o I talked to the man that you met and which (incidentally) was dressed in red. ... and which wears something red... [literally] |

Lesson 8: Terminator elision

| je'u mi djica lo nu le merko poi tunba mi vau ku'o ku jimpe lo du'u mi na nelci lo nu ri darxi mi vau kei ku vau kei ku vau kei ku vau I do wish the American, who is my sibling, would understand that I don't like that he hits me. |

Regardless of whether the above sentence is understood (it shouldn't be, as it contains words we have not covered in these lessons yet), one thing stands out: As more complex Lojban structures are learned, more and more of the sentences get filled with ku, kei, ku'o and other such words which by themselves carry no meaning.

The function of all these words is to signal the end of a certain grammatical construct, like for instance convert selbri to sumti in the case of ku. The English word for this kind of word is terminator, the Lojban word is famyma'o. They are underlined in the example above.

Note: The vau in the above example is the famyma'o for end bridi. There is a good reason you have not yet seen it; stay tuned.

- vau = famyma'o: terminates bridi.

In most spoken and written Lojban, most famyma'o are skipped (elided). This saves a great many syllables in speech and much space in writing. However, one must always be careful when eliding famyma'o. In the simple example lo merko ku klama, removing the famyma'o ku would yield lo merko klama, which is a single sumti made from the tanru merko klama. Thus, it means an American traveler instead of an American travels. famyma'o elision can lead to very wrong results if done incorrectly, which is why you haven't learned about it until now.

The rule for when famyma'o can be elided is very simple, at least in theory: You can elide a famyma'o, if and only if doing so does not change the grammatical constructs in the sentence. In other words, a construct extends as far right as possible, until either its famyma'o or another word not allowed in the construct appears.

Most famyma'o can be safely elided at the end of the bridi. Exceptions are the obvious ones like end quote-famyma'o and end bridi grouping-famyma'o. This is why vau is almost never used: simply beginning a new bridi with .i will almost always terminate the preceding bridi anyway. It has one frequent use, however. Since attitudinals always apply to the preceding word, applying one to a famyma'o applies it to the entire construct which is terminated. Using vau, one can then use attitudinals in afterthought and apply them to the entire bridi:

| za'a do dunda lo zdani {ku} lo prenu {ku}... vau .i'e I see that you give a home to a person... I approve! |

- prenu = x1 is a person; x1 has a personality.

Knowing the basic rules for famyma'o elision, we can thus return to the original sentence and begin removing famyma'o:

| je'u mi djica lo nu le merko poi tunba mi vau ku'o ku jimpe lo du'u mi na nelci lo nu ri darxi mi vau kei ku vau kei ku vau kei ku vau |

We can see that the first vau is grammatically unnecessary, because the next non-famyma'o-word is jimpe, which is a selbri. Since there can only be one selbri per bridi, vau is not needed. Since jimpe as a selbri cannot be in the relative clause either (only one bridi in a clause, only one selbri in each bridi), we can elide ku'o. Likewise, jimpe cannot be a second selbri inside the construct le merko poi{...}, so the ku is not needed either. Furthermore, all the famyma'o at the very end of the sentence can be elided too, since beginning a new bridi will terminate all of these constructs anyway.

We then end up with:

| je'u mi djica lo nu le merko poi tunba mi jimpe lo du'u mi na nelci lo nu ri darxi mi |

with no famyma'o at all!

When eliding famyma'o, it is a good idea to be acquainted with cu. cu is one of those words which can make your (Lojbanic) life a lot easier. What it does is to separate any previous sumti from the selbri. One could say that it defines the next word to be a selbri, and terminates exactly as much as it needs to in order to do that.

- cu = elidable marker: separates selbri from preceding sumti, allows preceding famyma'o elision.

- prami = x1 loves x2

| lo su'u do cusku lo se du'u do prami mi vau kei ku vau kei ku se djica mi = lo su'u do cusku lo se du'u do prami mi cu se djica mi That you say that you love me is desired by me = I wish you said you loved me. |

Note: cu is not a famyma'o, because it is not tied to one specific construct. But it can be used to elide other famyma'o.

One of the greatest strengths of cu is that it quickly becomes easy to intuitively understand. By itself it means nothing, but it reveals the structure of Lojban expressions by identifying the core selbri. In the original example with the violent American brother, using cu before jimpe does not change the meaning of the sentence in any way, but might make it easier to read.

In the following couple of lessons, cu will be used when necessary, and all famyma'o elided if possible. The elided famyma'o will be encased in curly brackets, as shown below. Try to translate it!

.a'o do noi ke'a lojbo .o'a dai {ku'o} cu jimpe lo du'u lo famyma'o {ku} cu vajni {vau} {kei} {ku} {vau}

- vajni = x1 is important to x2 for reason x3

- jimpe = x1 understands that x2 (du'u-abstraction) is true about x3

- a'o = attitudinal: simple propositional emotion: Hope - despair

- o'a = attitudinal: simple propositional emotion: pride - modesty/humility - shame

- dai = attitudinal modifier: Empathy (subscribes attitudinal to someone else, unspecified)

What do I state?

Answer: I hope that you, a proud Lojbanist, understand that famyma'o are important

Fun side note: Most people well-versed in famyma'o-elision do it so instinctively that they often must be reminded how important understanding famyma'o is to the understanding of Lojban. Therefore, each Tuesday has been designated Terminator Day or famyma'o djedi on the Lojban IRC chatroom. During Terminator Day, many people try (and often fail) to remember to write out all famyma'o, with some very verbose conversations as a result.
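If you want to practice for Terminator Day, the curly-bracket notation introduced above is easy to process mechanically. The following Python sketch is only a notational helper under stated assumptions: the function names write_out and elide are invented here, and the code does not check whether an elision is actually grammatical. It simply trusts that whoever put the brackets there applied the elision rule correctly.

```python
import re

# Matches one bracketed (elided) famyma'o such as {ku} or {vau}.
BRACKETED = re.compile(r"\{([^{}]*)\}")


def write_out(text):
    """Terminator Day form: keep every bracketed word, but drop the brackets."""
    return " ".join(BRACKETED.sub(r" \1 ", text).split())


def elide(text):
    """Everyday form: drop every bracketed word entirely."""
    return " ".join(BRACKETED.sub(" ", text).split())


sentence = (
    ".a'o do noi ke'a lojbo .o'a dai {ku'o} cu jimpe "
    "lo du'u lo famyma'o {ku} cu vajni {vau} {kei} {ku} {vau}"
)
full_form = write_out(sentence)
short_form = elide(sentence)
```

With the exercise sentence above, short_form is what you would normally say, and full_form is what you would type on a Tuesday.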

Lesson 9: sumtcita

So far we have been doing pretty well with the selbri we have had at hand. However, there is a finite number of defined selbri out there, and in many cases the sumti places are not useful for what we had in mind. What if, say, I want to say that I am translating using a computer? There is no place in the structure of fanva to specify what tool I translate with, since, most of the time, that is not necessary. Not to worry, this lesson is on how to add additional sumti places to the selbri.

The most basic way to add sumti places is with fi'o SELBRI fe'u (yes, another example of a famyma'o, fe'u. It's almost never necessary, so this might be the last time you ever see it).

In between these two words goes a selbri, and like lo SELBRI ku, fi'o SELBRI fe'u extracts the x1 of the selbri put into it. However, with fi'o SELBRI fe'u, the selbri place is converted, not to a sumti, but to a sumtcita, meaning sumti-label, with the place structure of the x1 of the selbri it converted. This sumtcita then absorbs the next sumti. One could say that using a sumtcita, you import a sumti place from another selbri, and add it to the bridi being said.

Note: Sometimes, especially in older texts, the term tag or modal is used for sumtcita. Ignore those puny English expressions. We teach proper Lojban here.

While it is hard to grasp the process from reading about it, an example can perhaps show its actual simplicity:

- skami = x1 is a computer for purpose x2

- pilno = x1 uses x2 as a tool for doing x3

mi fanva ti fi'o se pilno {fe'u} lo skami {ku}{vau} - I translate this with a computer

The x2 of pilno, which is the x1 of se pilno, is a place structure for a tool being used by someone. This place structure is captured by fi'o SELBRI fe'u, added to the main selbri, and then filled by lo skami. The idea of sumtcita is sometimes expressed in English using the following translation:

I translate this with-tool: A computer

A sumtcita can only absorb one sumti, which is always the following one. Alternatively, one can use the sumtcita construct by itself without a sumti. In this case you need to either put it before the selbri or terminate it with ku. In that case, one can think of the sumtcita as having the word zo'e as its sumti.

- zukte = x1 is a volitional entity carrying out action x2 for purpose x3

- zarci = x1 is a market/store/exchange/shop(s) selling/trading (for) x2, operated by/with participants x3

fi'o zukte {fe'u} ku lo prenu {ku} cu klama lo zarci {ku}{vau} - By their own volition, a person is going to the store

Note that there is ku in fi'o zukte {fe'u} ku. Without it the sumtcita would have absorbed lo prenu {ku} and we don't want that.

We can say the same in other words:

fi'o zukte {fe'u} zo'e lo prenu {ku} cu klama lo zarci {ku}{vau}

lo prenu {ku} cu fi'o zukte fe'u klama lo zarci {ku}{vau}

retaining the meaning.

What does mi jimpe fi lo skami fi'o se tavla {fe'u} mi state?

Answer: I understand something about computers, spoken to me

Putting the sumtcita right in front of the selbri also makes it self-terminate, since sumtcita can only absorb sumti, and not selbri. This fact will be of importance in the next lesson, as you will see.

Actually, fi'o is not used very often despite its flexibility. What IS used very often, though, are BAI. BAI is a class of Lojban words, which in themselves act as sumtcita. An example of this is zu'e, the BAI for zukte. Grammatically, zu'e is the same as fi'o zukte fe'u. Thus, the above example could be reduced to:

zu'e ku lo prenu {ku} cu klama lo zarci {ku}{vau}

There exist something like 60 BAI, and a lot of these are very useful indeed. Furthermore, BAI can also be converted with se and friends, meaning that se zu'e is equal to fi'o se zukte fe'u, which results in a great many more BAI.
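The correspondence between a BAI and its fi'o form is regular enough to write down as a small lookup. The Python sketch below is only an illustration under stated assumptions: the function name expand_bai is made up, and the table contains just two entries. zu'e comes from this lesson, while pi'o as the BAI of pilno is taken from the standard BAI list rather than from the text above.

```python
# BAI word -> the selbri it abbreviates. Only zu'e appears in this lesson;
# pi'o (for pilno) is an extra entry assumed from the standard BAI list.
BAI = {"zu'e": "zukte", "pi'o": "pilno"}
SE_WORDS = ("se", "te", "ve", "xe")


def expand_bai(phrase):
    """Rewrite 'BAI' or 'se BAI' as the equivalent fi'o ... fe'u construct."""
    *conversion, bai = phrase.split()
    if len(conversion) > 1 or any(word not in SE_WORDS for word in conversion):
        raise ValueError(f"expected at most one se-family word before the BAI: {phrase}")
    if bai not in BAI:
        raise ValueError(f"BAI not in this small table: {bai}")
    return " ".join(["fi'o", *conversion, BAI[bai], "fe'u"])


plain = expand_bai("zu'e")        # fi'o zukte fe'u
converted = expand_bai("se zu'e")  # fi'o se zukte fe'u
with_tool = expand_bai("se pi'o")  # fi'o se pilno fe'u
```

Under that assumption about pi'o, the computer example from earlier in this lesson could also be phrased with se pi'o in place of fi'o se pilno {fe'u}.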

Lesson 10: PU, FAhA, ZI, VA, ZEhA, VEhA

How unfamiliar a language like Lojban must seem to an English-speaker, when one can read through nine lessons of Lojban grammar without meeting a tense once! This is because, unlike many natural languages (most Indo-European ones, for instance), all tenses in Lojban are optional. Saying mi citka lo cirla {ku} can mean I eat cheese or I ate the cheese or I always eat cheese or In a moment, I will have just finished eating cheese. Context resolves what is correct, and in most conversation, tenses are not needed at all. However, when it's needed it's needed, and it must be taught. Furthermore, Lojban tenses are unusual because they treat time and space fundamentally the same - saying that I worked a long time ago is not grammatically different from saying I work far away to the north.

Like many other languages, the Lojban tense system is perhaps the most difficult part of the language. Unlike many other languages though, it's perfectly regular and makes sense. So fear not, for it will not involve sweating to learn how to modify the selbri or anything silly like that.

No, in the Lojban tense system, all tenses are sumtcita, which we have conveniently just made ourselves familiar with. Okay okay, technically, tenses are slightly different from other sumtcita, but this difference is almost insignificant, and won't be explained until later. In most respects they are like all other sumtcita; in particular, a tense used without a sumti is terminated by ku, which the examples in this lesson write out as {ku} to make this explicit.

There are many different kinds of tense-sumtcita, so let's start at the ones most familiar to English-speakers.

- pu = sumtcita: before {sumti}

- ca = sumtcita: at the same time as {sumti}

- ba = sumtcita: after {sumti}

These are like the English concepts before, now and after. In actuality though, one could argue that two point-like events can never occur exactly simultaneously, rendering ca useless. But ca extends slightly into the past and the future, meaning just about now. This is because human beings don't perceive time in a perfectly logical way, and the Lojban tense system reflects that.

Side note: It was actually suggested that the Lojban tense system be made relativistic. That idea, however, was dropped, because it is counter-intuitive, and would mean that to learn Lojban, one would have to learn the theory of relativity first.

So, how would you say I express this (pointing to a paper) after I came to this place?

Answer: mi cusku ti ba lo nu mi klama ti {vau} {kei} {ku} {vau}

Usually when speaking, we do not need to specify which event in the past this action is relative to. In: I gave a computer away, we can assume that the action happened relative to now, and thus we can elide the sumti of the sumtcita, because it's obvious:

pu ku mi dunda lo skami {ku} {vau} or

mi dunda lo skami {ku} pu {ku} {vau} or, more commonly

mi pu {ku} dunda lo skami {ku} {vau}.

The sumti which fills the sumtcita is an implied zo'e, which is almost always understood as relative to the speaker's time and place (this is especially important when speaking about left and right). If speaking about some events that happened at some time other than the present, it is sometimes assumed that all tenses are relative to the event which is being spoken about. In order to clarify that all tenses are relative to the speaker's current position, the word nau can be used at any time. Another word, ki, marks a tense which is then considered the new standard. That will be taught way later.

- nau = updates temporal and spacial frame of reference to the speaker's current here and now.

- gugde = x1 is the country of people x2 with land/territory x3

Also note that mi pu {ku} klama lo merko gugde {ku} {vau}, I went to America, does not imply that I'm not still traveling to the USA, only that it was also true some time in the past, for instance five minutes ago.

As mentioned, spacial and temporal tenses are very much alike. Contrast the previous three time tenses with these four spacial tenses:

- zu'a = sumtcita: left of {sumti}

- ca'u = sumtcita: in front of {sumti}

- ri'u = sumtcita: right of {sumti}

- bu'u = sumtcita: at the same place as {sumti} (spacial equivalent of ca)

- .o'o = attitudinal: complex pure emotion: patience - tolerance - anger

What would .o'o nai ri'u ku lo prenu {ku} cu darxi lo gerku {ku} pu {ku} {vau} mean?

- darxi = x1 beats/hits x2 with instrument x3 at locus x4

Answer: {anger!} To the right (of something, probably me) and in the past (of some event), something is an event of a person beating a dog. or A person hit a dog to my right!

If there are several tense sumtcita in one bridi, the rule is that you read them from left to right, thinking of it as a so-called imaginary journey, where you begin at an implied point in time and space (default: the speaker's now and here), and then follow the sumtcita one at a time from left to right.

Example

mi pu {ku} ba {ku} jimpe fi lo lojbo famyma'o {ku} {vau} = At some time in the past, I was going to understand about famyma'o.

mi ba {ku} pu {ku} jimpe fi lo lojbo famyma'o {ku} {vau} = At some point in the future, I will have understood about famyma'o.

Since we do not specify the amount of time we move back or forth, the understanding could in both cases happen in the future or the past of the point of reference.

Also, if spacial and temporal tenses are mixed, the rule is to always put temporal before spacial.

Suppose we want to specify that a person hit a dog just a minute ago. The words zi, za and zu specify a short, unspecified (presumably medium) and long distance in time. Notice the vowel order i, a and u. This order appears again and again in Lojban, and might be worth memorizing. Short and long are always context dependent, relative and subjective. Two hundred years is a short time for a species to evolve, but a long time to wait for the bus.

- zi = sumtcita: Occurring the small distance of {sumti} in time from point of reference

- za = sumtcita: Occurring the unspecified (medium) distance of {sumti} in time from point of reference

- zu = sumtcita: Occurring the far distance of {sumti} in time from the point of reference

Similarly, spacial distance is marked by vi, va and vu for short, unspecified (medium) and long distance in space.

- vi = sumtcita: Occurring the small distance of {sumti} in space from point of reference

- va = sumtcita: Occurring the unspecified (medium) distance of {sumti} in space from point of reference

- vu = sumtcita: Occurring the far distance of {sumti} in space from the point of reference

- gunka = x1 works at x2 with objective x3

Translate: ba {ku} za ku mi vu {ku} gunka {vau}

Answer: Some time in the future, I will work at a place far away

Note: People rarely use zi, za or zu without a pu or ba in front of it. This is because most people always need to specify past or future in their native language. When you think about it Lojbanically, most of the time the time-direction is obvious, and the pu or ba superfluous!

The order in which direction-sumtcita and distance-sumtcita are said makes a difference. Remember that the meanings of several tense words placed together are pictured by an imaginary journey reading from left to right. Thus pu zu is a long time ago while zu pu is in the past of some point in time which is a long time toward the future or the past of now. In the first example, pu shows that we begin in the past, zu then that it is a long time backwards. In the second example, zu shows that we begin at some point far away in time from now, pu then, that we move backwards from that point. Thus pu zu is always in the past. zu pu could be in the future. The fact that these time tenses combine in this way is one of the differences between tense sumtcita and other sumtcita. The meanings of other sumtcita are not altered by the presence of additional sumtcita in a bridi.
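The difference between pu zu and zu pu can be checked with a small Python sketch of the imaginary journey. This is a rough model under stated assumptions, not an official algorithm: the names journey and step are invented here, only the time words pu, ca, ba, zi, za and zu are handled, and the sketch tracks nothing but which side of the starting point (past, now or future) the journey could end on.

```python
PAST, NOW, FUTURE = -1, 0, 1
DIRECTIONS = {"pu": PAST, "ca": NOW, "ba": FUTURE}
DISTANCES = {"zi", "za", "zu"}


def step(position, direction):
    """Sides we could be on after moving an unknown amount in `direction`."""
    if direction == NOW or position == direction:
        return {position}
    if position == NOW:
        return {direction}
    # Moving back toward (and possibly past) the starting point: anything goes.
    return {PAST, NOW, FUTURE}


def journey(words):
    """Follow tense words left to right; return the sides the journey can end on."""
    positions = {NOW}
    after_direction = False
    for word in words:
        if word in DIRECTIONS:
            direction = DIRECTIONS[word]
            positions = set().union(*(step(p, direction) for p in positions))
            after_direction = direction != NOW
        elif word in DISTANCES:
            # Right after pu or ba, a distance only says how far that step was.
            # On its own, it is a step of that size in either direction.
            if not after_direction:
                positions = set().union(
                    *(step(p, PAST) | step(p, FUTURE) for p in positions)
                )
            after_direction = False
        else:
            raise ValueError(f"not a tense word in this sketch: {word}")
    return positions


always_past = journey(["pu", "zu"])   # only PAST
maybe_future = journey(["zu", "pu"])  # PAST, NOW or FUTURE
```

In this model, journey(["pu", "zu"]) can only end in the past, while journey(["zu", "pu"]) may end on either side of now, in line with the explanation above.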

As briefly implied earlier, all these constructs basically treat bridi as if they were point-like in time and space. In actuality, most events play out over a span of time and space. In the following few paragraphs, we will learn how to specify intervals of time and space.

- ze'i = sumtcita: spanning over the short time of {sumti}

- ze'a = sumtcita: spanning over the unspecified (medium) time of {sumti}

- ze'u = sumtcita: spanning over the long time of {sumti}

- ve'i = sumtcita: spanning over the short space of {sumti}

- ve'a = sumtcita: spanning over the unspecified (medium) space of {sumti}

- ve'u = sumtcita: spanning over the long space of {sumti}

Six words at a time, I know, but remembering the vowel sequence and the similarity of the initial letter z for temporal tenses and v for spacial tenses might help with memorizing them.

- .oi = attitudinal: pain - pleasure

Translate: .oi dai do ve'u {ku} klama lo dotco gugde {ku} ze'u {ku} {vau}

Answer: Ouch, you spend a long time traveling a long space to Germany

ze'u and its brothers also combine with other tenses to form compound tenses. The rule for ze'u and the others is that any tenses preceding it mark an endpoint of the process (relative to the point of reference) and any tenses coming after it mark the other endpoint relative to the first. This should be demonstrated with a couple of examples:

.o'ocu'i do citka pu {ku} ze'u {ku} ba {ku} zu {ku} {vau} - {tolerance} you eat beginning in the past and for a long time ending at some point far into the future of when you started or Hmpf, you ate for a long time.

One can also contrast do ca {ku} ze'i {ku} pu {ku} klama {vau} with do pu {ku} ze'i {ku} ca {ku} klama {vau}. The first event of traveling has one endpoint in the present and extends a little towards the past, while the second event has one endpoint in the past and extends only to the present (that is, slightly into the past or future) of that endpoint.

- jmive = x1 is alive by standard x2

What does ui mi pu {ku} zi {ku} ze'u {ku} jmive {vau} express?

Answer: {happiness!} I live from a little into the past and a long way towards the future or past (obviously the future, in this case) of that event or I am young, and have most of my life ahead of me :)

Just to underline the similarity with spacial tenses, let's have another example, this time with spacial tenses:

- .u'e = attitudinal: wonder - commonplace

.u'e za'a bu'u {ku} ve'u {ku} ca'u {ku} zdani {vau} - What does it mean?

Answer: {wonder} {I observe!} Extending a long space from here to my front is a home. or Wow, this home extending ahead is huge!

Before we continue with this syntax-heavy tense system, I recommend spending at least ten minutes doing something which doesn't occupy your brain in order to let the information sink in. Sing a song or eat a cookie very slowly - whatever, as long as you let your mind rest.

Lesson 11: ZAhO

Though we won't go through all Lojban tense constructs for now, there is one other kind of tense that I think should be taught now. These are called event contours, and represent a very different way of viewing tenses than we have seen so far. So let's get to it:

Using the tenses we have learned so far, we can imagine an indefinite time line, and we then place events on that line relative to the now. With event contours, however, we view each event as a process, which has certain stages: A time before it unfolds, a time when it begins, a time when it is in process, a time when it ends, and a time after it has ended. Event contours then tell us which part of the event's process was happening during the time specified by the other tenses. We need a couple of tenses first:

- pu'o = sumtcita: event contour: Bridi has not yet happened during {sumti}

- ca'o = sumtcita: event contour: Bridi is in process during {sumti}

- ba'o = sumtcita: event contour: The process of bridi has ended during {sumti}

This needs to be demonstrated by some examples. What does ui mi pu'o {ku} se zdani {vau} mean?

Answer: Yay, I'm about to have a home.

But hey, you ask, why not just say ui mi ba {ku} se zdani {vau} and even save a syllable? Because, remember, saying that you will have a home in the future says nothing about whether you have a home now. Using pu'o, though, you say that you are now in the past of the process of you having a home, which means that you don't have one now.

Note, by the way, that mi ba {ku} se zdani {vau} is similar to mi pu'o {ku} se zdani {vau}, and likewise with ba'o and pu. Why do they seem reversed? Because event contours view the present as seen from the viewpoint of the process, whereas the other tenses view events seen from the present.

Often, event contours are more precise than other kinds of tenses. Even more clarity is achieved by combining several tenses: .a'o mi ba {ku} zi {ku} ba'o {ku} gunka {vau} - I hope I've soon finished working.

In Lojban, we also operate with an event's natural beginning and its natural end. The term natural is highly subjective in this sense, and the natural end refers to the point in the process where it should end. You can say about a late train, for instance, that its process of reaching you is now extending beyond its natural end. An undercooked, but served meal, similarly, is being eaten before that process' natural beginning. The event contours used in these examples are as follows:

- za'o = sumtcita: event contour: Bridi is in process beyond its natural end during {sumti}

- xa'o = sumtcita: event contour: Bridi is immaturely in process during {sumti}

- cidja = x1 is food, which is edible for x2

Translate: .oi do citka za'o lo nu do ba'o {ku} u'e citka zo'e noi cidja do {vau} {ku'o} {vau} {kei} {ku}

Answer: Oy, you keep eating when you have finished, incredibly, eating something edible!

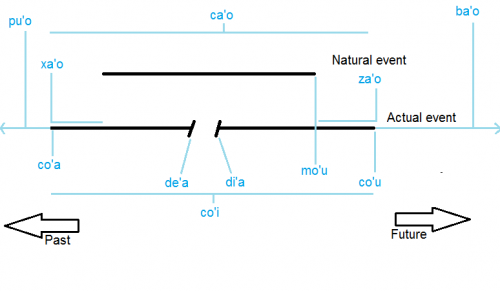

Image above: ZAhO tenses (event contours). All tenses above the line of the event signify stages covering an amount of time. All tenses below the event line signify stages which are point-like.

All of the tenses so far have described stages of a process which take some time (as shown on the graph above; those tenses above the event line). But many of the event contours describe point-like stages in the process, like its beginning. As is true of ca and bu'u, they actually extend slightly into the past and future of that point, and need not be precise.

The two most important point-like event contours are:

- co'a = sumtcita: event contour: Bridi is at its beginning during {sumti}

- co'u = sumtcita: event contour: Bridi is at its ending during {sumti}

Furthermore, there is a point where the process is naturally complete, but has not necessarily ended yet:

- mo'u = sumtcita: event contour: Bridi is at its natural ending during {sumti}

Most of the time, though, processes actually end at their natural ending; this is what makes it natural. Trains are not usually late, and people usually restrain themselves to eat only edible food.

Since a process can be interrupted and resumed, these points have earned their own event contours as well:

- de'a = sumtcita: event contour: Bridi is pausing during {sumti}

- di'a = sumtcita: event contour: Bridi is resuming during {sumti}

In fact, since jundi means x1 pays attention to x2, de'a jundi and di'a jundi are common Lojban ways of saying BRB and back. One could of course also say the event contours by themselves and hope the point gets across.

Finally, one can treat an entire event, from the beginning to the end, as one single point using co'i:

- penmi = x1 meets x2 at location x3

mi pu {ku} zi {ku} co'i {ku} penmi lo dotco prenu {ku} {vau} - A little while ago, I was at the point in time where I met a German person.
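The relationship between the event contours of this lesson can be sketched as a small timeline model. This is only an illustration, not an official definition: the function name `contours` and the idea of representing an event by three numbers (its actual start, its natural end and its actual end) are assumptions made for this example. The sketch uses ca'o for the ordinary in-process stage and leaves out xa'o, de'a, di'a and co'i.

```python
def contours(t, start, natural_end, end):
    """Return the event contours that fit time t for one event.

    Hypothetical model: the event actually runs from `start` to `end`
    (start <= end), and `natural_end` is the point where it should end.
    """
    if t < start:
        return ["pu'o"]          # the event has not begun yet
    if t > end:
        return ["ba'o"]          # the event is over
    found = []
    if t == start:
        found.append("co'a")     # point-like: the beginning
    if t == natural_end:
        found.append("mo'u")     # point-like: the natural ending
    if t == end:
        found.append("co'u")     # point-like: the actual ending
    if found:
        return found
    # Still in process: past the natural end it is za'o, otherwise ca'o.
    return ["za'o"] if t > natural_end else ["ca'o"]


# A late train: due at 10 (natural end), actually arriving at 15.
print(contours(12, 0, 10, 15))
```

With these numbers, time 12 falls after the natural end but before the actual end, so the sketch reports za'o, matching the late-train example above. When a process ends exactly at its natural end, both mo'u and co'u are returned for that point.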

Lesson 12: Orders and questions

Phew, those two long lessons of syntax-heavy Lojban give the brain something to ponder, especially because it's so different from English. So let's turn to something a little lighter: orders and questions.

What the... sit up and focus!

Since the way to express orders in English is to leave out the subject of the clause, why did you assume that it was you I was speaking to, and not ordering myself, or expressing the obligation someone else has? Because the English language understands that orders, by their very nature, are always directed towards the listener - the you, and so the subject is not necessary.

In Lojban, eliding the subject yields zo'e, so that possibility is sadly not open to us. Instead, we use the word ko, which is the imperative form of do. Grammatically and bridi-wise, it's equivalent to do, but it adds a layer of semantics, since it turns every statement with ko in it into an order. Do such that this sentence is true for you=ko! For the same reason we don't need the subject in English sentences, we don't need order-words derived from any other sumti than do.

How could you order someone to go far away for a long time (using klama as the only selbri)?

Answer: ko ve'u ze'u klama

(.i za'a dai a'o mi ca co'u ciska lo famyma'o .i ko jimpe vau .ui) - work it out. Note that:

- ciska = x1 writes text x2 on x3

Questions in Lojban are very easy to learn, and they come in two kinds: fill in the blank, and true/false questions. Let's begin with the true/false kind - that's quite manageable, since it only involves one word, xu.

xu works like an attitudinal in the sense that it can go anywhere, and it applies to the preceding word (or construct). It then transforms the sentence into a question, asking whether it is true or not. In order to affirm, you simply repeat the bridi:

xu ze'u zdani do .i ze'u zdani mi, or you just repeat the selbri, which is the bridi with all the sumti and tenses elided: zdani.

There is an even easier way to affirm using brika'i, but that is a tale for another time. To answer no or false, you simply answer with the bridi negated. That, too, will be left for later, and we will return to question answering then.

The other kind of question is fill in the blank. Here, you ask for the question word to be replaced with a construct which makes the bridi correct. There are several of these words, depending on what you are asking about:

- ma = sumti question

- mo = selbri question

- xo = number question

- cu'e = tense question

There are many others as well. To ask about a sumti, you then just place the question word where you want your answer: do dunda ma mi - You give what to me? This asks for the x2 to be filled with a correct sumti. The combination of sumtcita + ma is very useful indeed:

- mu'i = sumtcita: motivated by the abstraction of {sumti}

.oi do darxi mi mu'i ma - Oy, why do you hit me?!

Let's try another one. This time, you translate:

ui dai do ca ze'u pu mo

Answer: You're happy, what have you been doing all this long time until now? Technically, it could also mean what have you been?, but answering with ua nai li'a remna (Uh, human, obviously) is just being incredibly annoying on purpose.

Since tone of voice or sentence structure does not reveal whether a sentence is a question or not, one had better not miss the question word. Therefore, since people tend to focus more on words in the beginning or at the end of sentences, it's usually worth the while to re-order the sentence so that the question words are at those places. If that is not feasible, pau is an attitudinal marking that the sentence is a question. Conversely, pau nai explicitly marks any question as being rhetorical.

While we are on the topic of questions, it's also appropriate to mention the word kau, which is a marker for indirect questions. What's an indirect question, then? Well, take a look at the sentence: mi djuno lo du'u ma kau zdani do - I know what is your home.

- djuno = x1 knows fact(s) x2 are true about x3 by epistemology x4

One can think of it as the answer to the question ma zdani do. More rarely, one can mark a non-question word with kau, in which case one can still imagine it as the answer to a question: mi jimpe lo du'u dunda ti kau do - I understand what you have been given: it is this.
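The question words of this lesson can be collected in a small lookup table. The sketch below is only an illustration under simple assumptions: sentences are split on spaces, the function names `find_questions` and `fill_blank` are made up for this example, and no real parsing of the bridi is attempted. It finds the question words in a sentence, notes whether each one is marked as indirect by a following kau, and fills the first blank with a given answer.

```python
# Question words from this lesson and what each one asks for.
QUESTION_WORDS = {
    "xu": "true/false",
    "ma": "sumti",
    "mo": "selbri",
    "xo": "number",
    "cu'e": "tense",
}


def find_questions(sentence):
    """List (word, kind, is_indirect) for every question word found."""
    words = sentence.split()
    found = []
    for i, word in enumerate(words):
        if word in QUESTION_WORDS:
            # A question word directly followed by kau is an indirect question.
            indirect = i + 1 < len(words) and words[i + 1] == "kau"
            found.append((word, QUESTION_WORDS[word], indirect))
    return found


def fill_blank(sentence, answer):
    """Replace the first fill-in-the-blank question word with `answer`."""
    words = sentence.split()
    for i, word in enumerate(words):
        if word in QUESTION_WORDS and word != "xu":
            words[i] = answer
            return " ".join(words)
    return sentence  # nothing to fill in


print(find_questions("mi djuno lo du'u ma kau zdani do"))
print(fill_blank("do dunda ma mi", "ti"))
```

Note that `fill_blank` keeps the asker's point of view: a real reply would also swap do and mi, as in the xu example above.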

Lesson 13: Morphology and word classes

Back to heavier (and interesting) stuff.

If you haven't already, I strongly suggest you find the Lojbanic recording called "Story Time with Uncle Robin", or listen to someone speak Lojban on Mumble, and then practice your pronunciation. Having an internal conversation in your head in Lojban is only good if it isn't with all the wrong sounds, and learning pronunciation from written text is hard. Therefore, this lesson will not be on the Lojban sounds, however important they might be, but a short introduction to the Lojban morphology.

What is morphology? The word is derived from Greek meaning the study of shapes, and in this context, we talk about how we make words from letters and sounds, as contrasted with syntax - how we make sentences with words. Lojban operates with different morphological word classes, which are all defined by their morphology. To make it all nice and systematic though, words with certain functions tend to be grouped by morphological classes, but exceptions may occur.

| Class | Meaning | Defined By | Typical Function |
|---|---|---|---|
| Words: | | | |
| brivla | bridi-word | Among first 5 letters (excluding ') is a consonant cluster. Ends in vowel. | Acts as a selbri by default. Always has a place structure. |
| gismu | Root-word | 5 letters of the form CVCCV or CCVCV | One to five sumti places. Covers basic concepts. |
| lujvo | Compound word. Derived from lujvla, meaning complex word | Min. 6 letters. Made by stringing rafsi together with binding letters if necessary. | Covers more complex concepts than gismu. |
| zi'evla | Free-word | As brivla, but do not meet defining criteria of gismu or lujvo, ex: angeli | Covers unique concepts like names of places or organisms. |
| cmevla | Name-word | Beginning and ending with pause (full stop). Last sound/letter is a consonant. | Always acts as a name or as the content of a quote. |
| cmavo | Grammar-word. From cmavla, meaning small word | One consonant or zero, always at the beginning. Ends in a vowel. | Grammatical functions. Varies |
| Word-fragments: | | | |
| rafsi | Affix | CVC, CCV, CVV, CV'V, -CVCCV, -CCVCV, CVCCy- or CCVCy- | Not actual words, but can be strung together to form lujvo |

- cmevla are very easy to identify because they begin and end with a pause, signaled by a full stop in writing, and the last letter is a consonant. Cmevla have two functions: They can either act as a proper name, if prefixed by the article la (explained in the next lesson), or they can act as the content of a quote. As previously stated, one can mark stress in the names by capitalizing the letters which are stressed. Examples of cmevla are: .io'AN. (Johan), .mat. (Matt) and .cumindzyn. (Xuming Zeng). Names which do not end in consonants have to have one added: .anas. (Anna), or have the final vowel removed: .an.

- brivla are called bridi-words because they by default are selbri, and therefore almost all Lojban words with a place structure are brivla. This has also given them the English nickname content-words. It's nearly impossible to say anything useful without brivla, and almost all words for concepts outside Lojban grammar (and even most of the words for things in the language) are captured by brivla. As shown in the table, brivla have three subcategories:

- gismu are the root words of the language. Only about 1450 exist, and very few new ones are added. They cover the most basic concepts like circle, friend, tree and dream. Examples include zdani, pelxu and dunda

- lujvo are made by combining rafsi (see under rafsi), representing gismu. By combining rafsi, one narrows down the meaning of the word. lujvo are made by an elaborate algorithm, so making valid lujvo on the fly is near impossible, with few exceptions like selpa'i, from se prami, which can only have one definition. Instead, a lujvo is made once, its place structure is defined, and then that definition is made official by the dictionary. Examples include brivla (bridi-word), cinjikca (sexual-socializing = flirting) and cakcinki (shell-insect = beetle).

- zi'evla are made by making up words which fit the definition for brivla, but not for lujvo or gismu. They tend to cover concepts which are hard to cover by lujvo, for instance names of species, nations or very culturally specific concepts. Examples include xanguke (South Korea), cidjrpitsa (pizza) and .angeli (angel).

- cmavo are small words with one or zero consonants. They tend to not signify anything in the exterior world, but to have only grammatical function. Exceptions occur, and it's debatable to what extent attitudinals exist for their grammatical function. Another odd example is the class GOhA, whose words act as brivla. It is valid to type several cmavo in a row as one word, but in these lessons, that won't be done. By grouping certain cmavo in functional units, though, it is sometimes easier to read. Thus, uipuzuvukumi citka is valid, and is parsed and understood as ui pu zu vu ku mi citka. As with other Lojban words, one should (but need not always) place a full stop before any word beginning with a vowel.

- cmavo of the form xVV, CV'VV or V'VV are experimental, and are words which are not in the official language definition, but which have been added by Lojban users to respond to a certain need.

- rafsi are not Lojban words, and can never appear alone. However, several (more than one) rafsi combine to form lujvo. These must still live up to the brivla-definition, for instance lojban is invalid because it ends in a consonant (which makes it a cmevla), and ci'ekei is invalid because it does not contain a consonant cluster, and is thus read as two cmavo written as one word. Often, a 3-4 letter string is both a cmavo and a rafsi, like zu'e, which is both the BAI and the rafsi for zukte. Note that there is nowhere that both a cmavo and a rafsi would be grammatical, so these are not considered homophones. All gismu can double as (word-final) rafsi, if they are prefixed with another rafsi. The first four letters of a gismu suffixed with a "y" can also act as a rafsi, if they are suffixed with another rafsi. The vowel "y" can only appear in lujvo or cmevla. Valid rafsi letter combinations are: CVC, CCV, CVV, CV'V, CVCCy-, CCVCy-, -CVCCV and -CCVCV.
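The remark above that a run of cmavo typed as one word is still read as separate cmavo can be illustrated with a short sketch. It relies only on the rule from the table that a cmavo has at most one consonant, always at the beginning, and therefore starts a new cmavo at every consonant. The name `split_cmavo` is made up for this example, and the sketch cannot separate two vowel-initial cmavo that are written without a full stop between them.

```python
import re

# One cmavo: an optional leading consonant followed by vowels and apostrophes.
CMAVO = re.compile(r"[^aeiouy']?[aeiouy']+")


def split_cmavo(compound):
    """Split a string of cmavo written as one word into single cmavo."""
    parts = CMAVO.findall(compound)
    # If letters were skipped, the input held a consonant cluster or a
    # final consonant, so it was not a pure cmavo compound.
    if "".join(parts) != compound:
        raise ValueError(f"{compound!r} is not a plain cmavo compound")
    return parts


print(split_cmavo("uipuzuvukumi"))
print(split_cmavo("ci'ekei"))
```

The first call gives the six cmavo ui, pu, zu, vu, ku and mi, and the second reads ci'ekei as ci'e followed by kei. A word with a consonant cluster, such as citka, is rejected with a `ValueError`.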

Using what you know now, you should be able to answer the test I thus present:

Categorize each of the following words as cmevla (C), gismu (g), lujvo (l), zi'evla (z) or cmavo (c):

| | |
|---|---|
| A ) jai | G ) mumbl |
| B ) .irci | H ) .i'i |
| C ) bostu | I ) cu |
| D ) xelman | J ) plajva |
| E ) po'e | K ) danseke |
| F ) djisku | L ) .ertsa |

Answer: a-c, b-z, c-g, d-C, e-c, f-l, g-C, h-c, i-c, j-l, k-z, l-z. I left out the full stops before and after names in order not to make the task too easy. Note: some of these words, like bostu, do not exist in the dictionary, but this is irrelevant. The morphology still makes it a gismu, so it's just an undefined gismu. The same goes for .ertsa.
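The defining criteria from the table in this lesson can be turned into a rough classifier. The sketch below is a simplification, not the official morphology algorithm: the names `skeleton`, `is_lujvo_shaped` and `classify` are made up for this example, a word counts as a lujvo as soon as it can be cut into rafsi-shaped pieces (without checking that those rafsi actually exist), and binding letters are only handled where "y" is part of a four-letter rafsi.

```python
VOWELS = "aeiou"

# Rafsi shapes as C/V patterns; only some shapes may end a lujvo.
NON_FINAL = ("CVC", "CCV", "CVV", "CV'V", "CVCCy", "CCVCy")
FINAL = ("CCV", "CVV", "CV'V", "CVCCV", "CCVCV")


def skeleton(word):
    """Turn a word into its C/V pattern, keeping ' and y unchanged."""
    return "".join(
        "V" if ch in VOWELS else ch if ch in "'y" else "C" for ch in word
    )


def is_lujvo_shaped(shape, first=True):
    """Check whether a pattern splits into two or more rafsi shapes."""
    if not first and shape in FINAL:
        return True
    return any(
        shape.startswith(r) and is_lujvo_shaped(shape[len(r):], False)
        for r in NON_FINAL
    )


def classify(word):
    """Guess the morphological class of a single Lojban word."""
    shape = skeleton(word.strip(".").lower())
    if shape.endswith("C"):
        return "cmevla"            # last letter is a consonant
    if shape in ("CVCCV", "CCVCV"):
        return "gismu"
    # brivla need a consonant cluster among the first five letters,
    # not counting the apostrophe.
    if "CC" not in shape.replace("'", "")[:5]:
        return "cmavo"             # a single cmavo or a compound of them
    return "lujvo" if is_lujvo_shaped(shape) else "zi'evla"


for w in ["jai", ".irci", "bostu", "xelman", "djisku", "danseke"]:
    print(w, classify(w))
```

Run on the twelve quiz words, this sketch agrees with the answer key above. It would still need a rafsi list and the full hyphenation rules before it could be trusted on arbitrary words.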

Lesson 14: Lojban sumti 1ː LE and LA